Azure Cosmos DB Sink V2 Connector for Confluent Cloud¶

The fully-managed Azure Cosmos DB Sink V2 connector for Confluent Cloud writes data to a Microsoft Azure Cosmos database. The connector polls data from Apache Kafka® and writes to database containers.

Note

If you require private networking for fully-managed connectors, make sure to set up the proper networking beforehand. For more information, see Manage Networking for Confluent Cloud Connectors.

Features¶

The Azure Cosmos DB Sink V2 connector supports the following features:

Topic mapping: Maps the Kafka topic to the Azure Cosmos DB container.

Multiple key strategies:

- FullKeyStrategy: The ID generated is the Kafka record key. This is the default option.

- KafkaMetadataStrategy: The ID generated is a concatenation of the Kafka topic, partition, and offset. For example: ${topic}-${partition}-${offset}.

- ProvidedInKeyStrategy: The ID generated is the id field found in the key object.

- ProvidedInValueStrategy: The ID generated is the id field found in the value object.

- TemplateStrategy: The template string used to populate the id field of the document.

Every record must have a lowercase id field. This is an Azure Cosmos DB requirement. See the lower case id prerequisite.
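The following Python sketch models how the first four ID strategies pick a document id from a Kafka record. It is illustrative only and is not the connector's code: the function name derive_id is hypothetical, and converting the key to a string for FullKeyStrategy is an assumption. TemplateStrategy is left out because the template syntax is not described here.

```python
from typing import Any, Mapping, Optional


def derive_id(strategy: str, topic: str, partition: int, offset: int,
              key: Any = None, value: Optional[Mapping[str, Any]] = None) -> str:
    """Illustrative model of how each ID strategy chooses the document id."""
    if strategy == "FullKeyStrategy":
        # The whole record key becomes the id (string form is an assumption).
        return str(key)
    if strategy == "KafkaMetadataStrategy":
        # Mirrors the documented pattern ${topic}-${partition}-${offset}.
        return f"{topic}-{partition}-{offset}"
    if strategy == "ProvidedInKeyStrategy":
        # The key object must contain a lowercase "id" field.
        return str(key["id"])
    if strategy == "ProvidedInValueStrategy":
        # The value object must contain a lowercase "id" field.
        return str(value["id"])
    raise ValueError(f"strategy not covered by this sketch: {strategy}")


print(derive_id("KafkaMetadataStrategy", "orders", 0, 42))  # orders-0-42
```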

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Limitations¶

Be sure to review the following information.

- For connector limitations, see Azure Cosmos DB Sink Connector limitations.

- If you plan to use one or more Single Message Transforms (SMTs), see SMT Limitations.

- If you plan to use Confluent Cloud Schema Registry, see Schema Registry Enabled Environments.

Quick Start¶

Use this quick start to get up and running with the Confluent Cloud Azure Cosmos DB Sink V2 connector. The quick start provides the basics of selecting the connector and configuring it to stream Kafka events to an Azure Cosmos DB container.

- Prerequisites

Authorized access to a Confluent Cloud cluster on Azure.

The Confluent CLI installed and configured for the cluster. See Install the Confluent CLI.

Schema Registry must be enabled to use a Schema Registry-based format (for example, Avro, JSON_SR (JSON Schema), or Protobuf). See Schema Registry Enabled Environments for additional information.

At least one source Kafka topic must exist in your Confluent Cloud cluster before creating the sink connector.

The Azure Cosmos DB and the Kafka cluster must be in the same region.

Azure Cosmos DB requires an id field in every record. See ID strategies for an example of how each of these works. The following strategies are provided to generate the ID:

- FullKeyStrategy: The ID generated is the Kafka record key. This is the default option.

- KafkaMetadataStrategy: The ID generated is a concatenation of the Kafka topic, partition, and offset. For example: ${topic}-${partition}-${offset}.

- ProvidedInKeyStrategy: The ID generated is the id field found in the key object.

- ProvidedInValueStrategy: The ID generated is the id field found in the value object. If you select this ID strategy, you must create a new field named id. You can also use the following ksqlDB statement. The example below uses a topic named orders.

CREATE STREAM ORDERS_STREAM WITH (
  KAFKA_TOPIC = 'orders',
  VALUE_FORMAT = 'AVRO'
);

CREATE STREAM ORDER_AUGMENTED AS
  SELECT ORDERID AS `id`, ORDERTIME, ITEMID, ORDERUNITS, ADDRESS
  FROM ORDERS_STREAM;

- TemplateStrategy: The template string used to generate the id field.

Note

- The connector supports Upsert based on id.

- The connector does not support Delete for tombstone records.

Using the Confluent Cloud Console¶

Step 1: Launch your Confluent Cloud cluster¶

See the Quick Start for Confluent Cloud for installation instructions.

Step 2: Add a connector¶

In the left navigation menu, click Connectors. If you already have connectors in your cluster, click + Add connector.

Step 4: Enter the connector details¶

Note

- Ensure you have all your prerequisites completed.

- An asterisk ( * ) designates a required entry.

At the Add Azure Cosmos DB Sink V2 Connector screen, complete the following:

If you’ve already populated your Kafka topics, select the topics you want to connect from the Topics list.

To create a new topic, click +Add new topic.

- Select the way you want to provide Kafka Cluster credentials. You can choose one of the following options:

- My account: This setting allows your connector to globally access everything that you have access to. With a user account, the connector uses an API key and secret to access the Kafka cluster. This option is not recommended for production.

- Service account: This setting limits the access for your connector by using a service account. This option is recommended for production.

- Use an existing API key: This setting allows you to specify an API key and a secret pair. You can use an existing pair or create a new one. This method is not recommended for production environments.

Note

Freight clusters support only service accounts for Kafka authentication.

- Click Continue.

- Enter your Cosmos DB connection details:

- Cosmos Endpoint: The Cosmos endpoint URL. For example, https://connect-cosmosdb.documents.azure.com:443/.

- Cosmos Database name: The name of your Cosmos database.

- Cosmos Connection Auth Type: Authentication details for the Cosmos endpoint. Defaults to MasterKey, which authenticates using the Cosmos DB account key. If you use ServicePrincipal, enter the following details:

- ClientID/ApplicationID: Enter the clientID/applicationID of the account.

- Client secret/password: Enter the client secret/password of the account.

- TenantID: Enter the tenantID of the account.

- Click Continue.

Note

Configuration properties that are not shown in the Cloud Console use the default values. See Configuration Properties for all property values and definitions.

Input Kafka record value format: Select the input Kafka record value format (data coming from the Kafka topic). A valid schema must be available in Schema Registry to use a schema-based message format (for example, AVRO, JSON_SR (JSON Schema), or PROTOBUF). Defaults to AVRO.

For more information, see Schema Registry Enabled Environments.

Topic-Container map: Specify a comma-delimited list of Kafka topics mapped to Azure Cosmos DB containers. For example, topic1#container1,topic2#container2.

Cosmos DB Item write Strategy: Defaults to ItemOverwrite. Select one of the following options:

- ItemOverwrite: Involves using an upsert approach.

- ItemAppend: Involves using a create approach; ignores pre-existing items (that is, conflicts).

- ItemDelete: Deletes documents based on id/pk of the data frame.

- ItemDeleteIfNotModified: Deletes documents based on id/pk of the data frame if the etag hasn’t changed since collecting id/pk.

- ItemOverwriteIfNotModified: Involves using a create approach if the etag is empty; otherwise updates/replaces with an etag precondition. If the document was updated in the meantime, the precondition failure is ignored.

- ItemPatch: Partially updates all documents based on the patch configuration.
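To make the difference between the simpler write strategies concrete, here is a small in-memory model in Python. It is a conceptual sketch, not the connector's implementation: the function name apply_write and the returned status strings are hypothetical, a plain dictionary keyed by id stands in for the container, and the etag-based and patch strategies are not modeled.

```python
from typing import Dict


def apply_write(store: Dict[str, dict], strategy: str, doc: dict) -> str:
    """Apply one document to an in-memory 'container' keyed by id."""
    doc_id = doc["id"]
    if strategy == "ItemOverwrite":
        # Upsert: insert the item, or replace it if the id already exists.
        store[doc_id] = doc
        return "upserted"
    if strategy == "ItemAppend":
        # Create only: a pre-existing item (a conflict) is ignored.
        if doc_id in store:
            return "ignored"
        store[doc_id] = doc
        return "created"
    if strategy == "ItemDelete":
        # Delete by id; report whether anything was removed.
        return "deleted" if store.pop(doc_id, None) is not None else "absent"
    raise ValueError(f"strategy not covered by this sketch: {strategy}")


container: Dict[str, dict] = {}
print(apply_write(container, "ItemOverwrite", {"id": "1", "units": 2}))  # upserted
print(apply_write(container, "ItemAppend", {"id": "1", "units": 9}))     # ignored
```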

Show advanced configurations

Schema context: Select a schema context to use for this connector, if using a schema-based data format. This property defaults to the Default context, which configures the connector to use the default schema set up for Schema Registry in your Confluent Cloud environment. A schema context allows you to use separate schemas (like schema sub-registries) tied to topics in different Kafka clusters that share the same Schema Registry environment. For example, if you select a non-default context, a Source connector uses only that schema context to register a schema and a Sink connector uses only that schema context to read from. For more information about setting up a schema context, see What are schema contexts and when should you use them?.

Auto-restart policy

Enable Connector Auto-restart: Control the auto-restart behavior of the connector and its task in the event of user-actionable errors. Defaults to true, enabling the connector to automatically restart in case of user-actionable errors. Set this property to false to disable auto-restart for failed connectors. In such cases, you need to restart the connector manually.

Consumer configuration

Max poll interval(ms): Set the maximum delay between subsequent consume requests to Kafka. Use this property to improve connector performance in cases when the connector cannot send records to the sink system. The default is 300,000 milliseconds (5 minutes).

Max poll records: Set the maximum number of records to consume from Kafka in a single request. Use this property to improve connector performance in cases when the connector cannot send records to the sink system. The default is 500 records.

Schema Configuration

Value Subject Name Strategy: The method to construct the subject name used to register the value schema with the Schema Registry. Defaults to TopicNameStrategy. Valid values are TopicNameStrategy, RecordNameStrategy, or TopicRecordNameStrategy.

Account details

The Azure environment of the Cosmos DB account: Specify the particular Azure cloud environment in which your Cosmos DB account resides. Valid values are Azure, AzureChina, AzureUsGovernment, AzureGermany. Defaults to Azure.

Use gateway mode: A flag to indicate whether to use gateway mode for connecting to Cosmos DB. Defaults to false, meaning the SDK uses direct mode. For more information, see Azure Cosmos DB connectivity modes.

Preferred regions list: Use preferred regions list for a multi-region Cosmos DB account. Specify the regions either as an array ([East US, West US]) or as a comma-separated list (East US, West US). Confluent recommends using a Kafka cluster that is colocated in the same Azure region as your Cosmos DB account, and using the Azure region that hosts your Kafka cluster as the preferred region. For more information, see Azure Cosmos DB supported regions.

Write configuration details

Enable bulk mode: A flag to indicate whether Cosmos DB bulk mode is enabled for bulk write operations on Cosmos DB. Defaults to true.

Cosmos DB Item Write Max Concurrent Cosmos Partitions: Specify this property to optimize bulk processing when the input data in each batch has been repartitioned to balance the number of Cosmos partitions to which each batch needs to write. This is mainly useful for very large containers (with hundreds of physical partitions).

If specified, it indicates that each batch contains data from, at most, this number of Cosmos physical partitions. If not specified, it is determined from the number of the container's physical partitions, which means each batch is expected to contain data from all Cosmos physical partitions. Defaults to -1.

Cosmos DB initial bulk micro batch size: A micro-batch is flushed to the backend when the number of enqueued documents exceeds this size or the target payload size is reached. The size is tuned automatically based on the throttling rate. Defaults to 1. Reduce it to prevent initial requests from consuming too many RUs.

Default Cosmos DB patch operation type: Select the default patch operation that determines how document writes are handled in Cosmos DB. Defaults to set. Supported operation types are none, add, set, replace, remove, increment. For more information, see Supported operations.

Cosmos DB patch json property config: Configures patch operations in Cosmos DB using JSON properties. Use multiple definitions matching the following patterns, separated by commas: property(jsonProperty).op(operationType) or property(jsonProperty).path(patchInCosmosDB).op(operationType). The difference in the second pattern is that it also allows you to define a different Cosmos DB path. Note that nested JSON property configurations are not supported.

Conditional patch: Specify the condition under which a patch operation should be executed. For more information, see Partial document update.

Cosmos DB max retry attempts on write failures: The number of retry attempts the connector makes in the event of write failures. By default, the connector retries transient write errors up to 10 times.

Error tolerance level: Adjust this setting to specify how errors are handled after the specified number of retries has been exhausted. Setting the level to All enables the connector to log the error and then continue with its execution. Defaults to None, indicating that the connector will not tolerate any errors after all retries are exhausted.

ID Strategy details

Id Strategy: The IdStrategy class name determines how to generate unique IDs for documents.

- FullKeyStrategy: The ID generated is the Kafka record key.

- KafkaMetadataStrategy: The ID generated is a concatenation of the Kafka topic, partition, and offset. For example, ${topic}-${partition}-${offset}.

- ProvidedInKeyStrategy: The ID generated is the id field found in the key object.

- ProvidedInValueStrategy: The ID generated is the id field found in the value object.

- TemplateStrategy: The template string used to generate the id field.

Throughput control details

A flag to indicate whether throughput control is enabled: Defaults to false. Set this property to true to enable throughput control, and then enter the Cosmos DB account details.

Processing position

Set offsets: To define a specific offset, see Manage offsets.

See Configuration Properties for all property values and definitions.

Click Continue.

Based on the number of topic partitions you select, you will be provided with a recommended number of tasks.

- To change the number of recommended tasks, enter the number of tasks for the connector to use in the Tasks field. More tasks may improve performance.

- Click Continue.

Verify the connection details.

Click Launch.

The status for the connector should go from Provisioning to Running.

Step 5: Check for records¶

Verify that records are being produced in your Azure Cosmos database.

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Tip

When you launch a connector, a Dead Letter Queue topic is automatically created. See View Connector Dead Letter Queue Errors in Confluent Cloud for details.

Using the Confluent CLI¶

Complete the following steps to set up and run the connector using the Confluent CLI.

Note

Make sure you have all your prerequisites completed.

Step 1: List the available connectors¶

Enter the following command to list available connectors:

confluent connect plugin list

Step 2: List the connector configuration properties¶

Enter the following command to show the connector configuration properties:

confluent connect plugin describe <connector-plugin-name>

The command output shows the required and optional configuration properties.

Step 3: Create the connector configuration file¶

Create a JSON file that contains the connector configuration properties. The following example shows the required connector properties.

{

"name": "CosmosDbSinkV2Connector_0",

"config": {

"connector.class": "CosmosDbSinkV2",

"name": "CosmosDbSinkV2Connector_0",

"input.data.format": "AVRO",

"kafka.auth.mode": "KAFKA_API_KEY",

"kafka.api.key": "****************",

"kafka.api.secret": "**********************************************",

"topics": "pageviews",

"azure.cosmos.account.endpoint": "https://myaccount.documents.azure.com:443/",

"azure.cosmos.account.key": "****************************************",

"azure.cosmos.sink.database.name": "myDBname",

"azure.cosmos.sink.containers.topicMap": "pageviews#Container2",

"azure.cosmos.sink.id.strategy": "FullKeyStrategy",

"tasks.max": "1"

}

}
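If you prefer to generate this file rather than write it by hand, a short Python sketch such as the following can assemble it and catch missing properties early. The helper name render_connector_file is hypothetical, and the REQUIRED_KEYS tuple simply mirrors the example above (API-key authentication for Kafka and account-key authentication for Cosmos DB); it is an assumption, not an authoritative list.

```python
import json

# Keys taken from the example configuration above (assumed, not authoritative).
REQUIRED_KEYS = (
    "connector.class", "name", "input.data.format", "kafka.auth.mode",
    "kafka.api.key", "kafka.api.secret", "topics",
    "azure.cosmos.account.endpoint", "azure.cosmos.account.key",
    "azure.cosmos.sink.database.name",
    "azure.cosmos.sink.containers.topicMap", "tasks.max",
)


def render_connector_file(name: str, settings: dict) -> str:
    """Return the JSON text for a connector configuration file."""
    config = {"connector.class": "CosmosDbSinkV2", "name": name, **settings}
    missing = [key for key in REQUIRED_KEYS if key not in config]
    if missing:
        raise ValueError(f"missing properties: {', '.join(missing)}")
    # The connector name appears both at the top level and inside "config".
    return json.dumps({"name": name, "config": config}, indent=2)
```

Write the returned text to a file, for example azure-cosmos-v2-sink-config.json, and pass that file to the CLI in the next step.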

Note the following property definitions:

"connector.class": Identifies the connector plugin name.

"input.data.format": Sets the input Kafka record value format (data coming from the Kafka topic). Valid entries are AVRO, JSON_SR, PROTOBUF, or JSON. You must have Confluent Cloud Schema Registry configured if using a schema-based message format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

"name": Sets a name for your new connector.

"kafka.auth.mode": Identifies the connector authentication mode you want to use. There are two options: SERVICE_ACCOUNT or KAFKA_API_KEY (the default). To use an API key and secret, specify the configuration properties kafka.api.key and kafka.api.secret, as shown in the example configuration (above). To use a service account, specify the Resource ID in the property kafka.service.account.id=<service-account-resource-ID>. To list the available service account resource IDs, use the following command:

confluent iam service-account list

For example:

confluent iam service-account list

   Id     | Resource ID |       Name        |    Description
+---------+-------------+-------------------+-------------------
   123456 | sa-l1r23m   | sa-1              | Service account 1
   789101 | sa-l4d56p   | sa-2              | Service account 2

"azure.cosmos.account.endpoint": A URI with the form https://ccloud-cosmos-db-1.documents.azure.com:443/.

"azure.cosmos.account.key": The Azure Cosmos master key.

"azure.cosmos.sink.database.name": The name of your Cosmos DB.

"azure.cosmos.sink.containers.topicMap": A comma-delimited list of Kafka topics mapped to Cosmos DB containers. Note that this property only supports 1:1 mapping between topic and container name. For example: topic1#container1,topic2#container2.

(Optional) "azure.cosmos.sink.id.strategy": Defaults to FullKeyStrategy. Enter one of the following strategies:

- FullKeyStrategy: The ID generated is the Kafka record key.

- KafkaMetadataStrategy: The ID generated is a concatenation of the Kafka topic, partition, and offset. For example: ${topic}-${partition}-${offset}.

- ProvidedInKeyStrategy: The ID generated is the id field found in the key object.

- ProvidedInValueStrategy: The ID generated is the id field found in the value object.

- TemplateStrategy: The template string used to generate the id field.

Every record must have a lowercase id field. This is an Azure Cosmos DB requirement. See Lower case id prerequisite.

"tasks.max": The number of tasks to use with the connector. More tasks may improve performance.

Single Message Transforms: See the Single Message Transforms (SMT) documentation for details about adding SMTs using the CLI.

See Configuration Properties for all property values and descriptions.

Step 4: Load the properties file and create the connector¶

Enter the following command to load the configuration and start the connector:

confluent connect cluster create --config-file <file-name>.json

For example:

confluent connect cluster create --config-file azure-cosmos-v2-sink-config.json

Example output:

Created connector CosmosDbSinkV2Connector_0 lcc-do6vzd

Step 5: Check the connector status¶

Enter the following command to check the connector status:

confluent connect cluster list

Example output:

ID           |             Name              | Status  | Type | Trace
+------------+-------------------------------+---------+------+-------+
lcc-do6vzd   | CosmosDbSinkV2Connector_0     | RUNNING | sink |       |

Step 6: Check for records¶

Verify that records are populating the endpoint.

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Tip

When you launch a connector, a Dead Letter Queue topic is automatically created. See View Connector Dead Letter Queue Errors in Confluent Cloud for details.

V1 to V2 Migration¶

Confluent recommends upgrading from version 1 to version 2 of this connector to take advantage of the latest features, including support for the TemplateStrategy ID strategy.

Use the following steps to migrate to the version 2 connector. Implement and validate any connector changes in a pre-production environment before promoting to production.

Important

If you plan to migrate from version 1 to version 2, set the azure.cosmos.sink.id.strategy configuration property to FullKeyStrategy to avoid any migration failures. This is also the default ID strategy.

Pause the V1 connector.

Get the offset for the V1 connector.

Create the V2 connector using the offset from the previous step.

confluent connect cluster create [flags]

For example:

Create a configuration file with connector configs and offsets.

{
  "name": "(connector-name)",
  "config": {
    ... // connector specific configuration
  },
  "offsets": [
    {
      "partition": {
        ... // connector specific configuration
      },
      "offset": {
        ... // connector specific configuration
      }
    }
  ]
}

Create a V2 connector in the current or specified Kafka cluster context.

confluent connect cluster create --config-file config.json
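As a rough sketch, the migration file can also be assembled in Python from the V2 settings and the offsets you retrieved from the V1 connector. The function name build_migration_file is hypothetical. The contents of each partition and offset object are connector specific, so the sketch passes them through untouched and only checks that both objects are present.

```python
import json
from typing import Any, Dict, List


def build_migration_file(name: str, config: Dict[str, Any],
                         offsets: List[Dict[str, Any]]) -> str:
    """Combine V2 connector settings with offsets captured from the V1 connector."""
    for entry in offsets:
        # Each entry is expected to carry a "partition" and an "offset" object.
        if "partition" not in entry or "offset" not in entry:
            raise ValueError(f"entry lacks 'partition' or 'offset': {entry!r}")
    # Same top-level layout as the configuration file shown above.
    return json.dumps({"name": name, "config": config, "offsets": offsets}, indent=2)
```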

Verify the migration and confirm that the connector is running successfully with the V1 payloads.

Delete the V1 connector.

For more information on offsets, see Sink connectors.

Configuration Properties¶

Use the following configuration properties with the fully-managed connector. For self-managed connector property definitions and other details, see the connector docs in Self-managed connectors for Confluent Platform.

How should we connect to your data?¶

name

Sets a name for your connector.

- Type: string

- Valid Values: A string at most 64 characters long

- Importance: high

Schema Config¶

schema.context.name

Add a schema context name. A schema context represents an independent scope in Schema Registry. It is a separate sub-schema tied to topics in different Kafka clusters that share the same Schema Registry instance. If not used, the connector uses the default schema configured for Schema Registry in your Confluent Cloud environment.

- Type: string

- Default: default

- Importance: medium

value.subject.name.strategy

Determines how to construct the subject name under which the value schema is registered with Schema Registry.

- Type: string

- Default: TopicNameStrategy

- Valid Values: RecordNameStrategy, TopicNameStrategy, TopicRecordNameStrategy

- Importance: medium

Input messages¶

input.data.format

Sets the input Kafka record value format. Valid entries are AVRO, JSON_SR, PROTOBUF, or JSON. Note that you need to have Confluent Cloud Schema Registry configured if using a schema-based message format like AVRO, JSON_SR, and PROTOBUF.

- Type: string

- Importance: high

Kafka Cluster credentials¶

kafka.auth.mode

Kafka Authentication mode. It can be one of KAFKA_API_KEY or SERVICE_ACCOUNT. It defaults to KAFKA_API_KEY mode.

- Type: string

- Default: KAFKA_API_KEY

- Valid Values: KAFKA_API_KEY, SERVICE_ACCOUNT

- Importance: high

kafka.api.key

Kafka API Key. Required when kafka.auth.mode==KAFKA_API_KEY.

- Type: password

- Importance: high

kafka.service.account.id

The Service Account that will be used to generate the API keys to communicate with Kafka Cluster.

- Type: string

- Importance: high

kafka.api.secret

Secret associated with Kafka API key. Required when kafka.auth.mode==KAFKA_API_KEY.

- Type: password

- Importance: high
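The relationship between kafka.auth.mode and the other credential properties can be sketched in Python as follows. The helper name kafka_auth_properties is hypothetical, and the rule that a service account ID is required in SERVICE_ACCOUNT mode is inferred from the descriptions above rather than stated outright.

```python
from typing import Dict, Optional


def kafka_auth_properties(mode: str = "KAFKA_API_KEY",
                          api_key: Optional[str] = None,
                          api_secret: Optional[str] = None,
                          service_account_id: Optional[str] = None) -> Dict[str, str]:
    """Return the Kafka credential properties that match the chosen auth mode."""
    if mode == "KAFKA_API_KEY":
        if not api_key or not api_secret:
            raise ValueError("KAFKA_API_KEY mode needs an API key and secret")
        return {"kafka.auth.mode": mode,
                "kafka.api.key": api_key,
                "kafka.api.secret": api_secret}
    if mode == "SERVICE_ACCOUNT":
        if not service_account_id:
            raise ValueError("SERVICE_ACCOUNT mode needs a service account resource ID")
        return {"kafka.auth.mode": mode,
                "kafka.service.account.id": service_account_id}
    raise ValueError(f"unknown kafka.auth.mode: {mode}")


print(kafka_auth_properties("SERVICE_ACCOUNT", service_account_id="sa-l1r23m"))
```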

Which topics do you want to get data from?¶

topics

Identifies the topic name or a comma-separated list of topic names.

- Type: list

- Importance: high

Connect to your Azure Cosmos DB¶

azure.cosmos.account.endpoint

Cosmos endpoint URL. For example: https://connect-cosmosdb.documents.azure.com:443/.

- Type: string

- Importance: high

azure.cosmos.sink.containers.topicMap

A comma-delimited list of Kafka topics mapped to Cosmos containers. For example: topic1#con1,topic2#con2.

- Type: string

- Importance: high
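The topic#container format is easy to mistype, so it can help to check a mapping string before submitting it. The following Python sketch parses the format described above; the function name parse_topic_map is hypothetical, and rejecting a topic that appears twice is an assumption based on the 1:1 mapping requirement.

```python
from typing import Dict


def parse_topic_map(raw: str) -> Dict[str, str]:
    """Parse 'topic1#con1,topic2#con2' into {'topic1': 'con1', 'topic2': 'con2'}."""
    mapping: Dict[str, str] = {}
    for entry in raw.split(","):
        topic, sep, container = entry.strip().partition("#")
        if not sep or not topic or not container:
            raise ValueError(f"expected topic#container, got {entry!r}")
        if topic in mapping:
            # Only a 1:1 mapping between topic and container is supported.
            raise ValueError(f"topic {topic!r} is mapped more than once")
        mapping[topic] = container
    return mapping


print(parse_topic_map("topic1#con1,topic2#con2"))
```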

azure.cosmos.sink.database.name

Cosmos target database to write records into.

- Type: string

- Importance: high

Account details¶

azure.cosmos.account.environment

The Azure environment of the Cosmos DB account: Azure, AzureChina, AzureUsGovernment, AzureGermany.

- Type: string

- Default: AZURE

- Valid Values: AZURE, AZURE_CHINA, AZURE_GERMANY, AZURE_US_GOVERNMENT

- Importance: medium

azure.cosmos.mode.gateway

Flag to indicate whether to use gateway mode. Defaults to false, meaning the SDK uses direct mode. For more information, see https://learn.microsoft.com/azure/cosmos-db/nosql/sdk-connection-modes

- Type: boolean

- Default: false

- Importance: low

azure.cosmos.preferredRegionList

Preferred regions list to be used for a multi-region Cosmos DB account. This is a comma-separated value (for example, [East US, West US] or East US, West US). The preferred regions you provide are used as a hint. You should use a Kafka cluster colocated with your Cosmos DB account and pass the Kafka cluster region as the preferred region. For the list of Azure regions, see https://docs.microsoft.com/dotnet/api/microsoft.azure.documents.locationnames?view=azure-dotnet&preserve-view=true.

- Type: string

- Importance: low
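Because the preferred regions value is accepted in two shapes, a small normalizer shows that both forms name the same regions. This is a Python sketch only; the function name parse_preferred_regions is hypothetical and the connector's own parsing may differ.

```python
from typing import List


def parse_preferred_regions(raw: str) -> List[str]:
    """Accept '[East US, West US]' or 'East US, West US' and return region names."""
    text = raw.strip()
    if text.startswith("[") and text.endswith("]"):
        # Drop the surrounding brackets of the array form.
        text = text[1:-1]
    return [region.strip() for region in text.split(",") if region.strip()]


print(parse_preferred_regions("[East US, West US]"))
print(parse_preferred_regions("East US, West US"))
```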

azure.cosmos.auth.type

Cosmos connection auth type.

- Type: string

- Default: MasterKey

- Valid Values: MasterKey, ServicePrincipal

- Importance: high

azure.cosmos.account.key

Cosmos DB account key (only required when auth.type is MasterKey).

- Type: password

- Importance: medium

azure.cosmos.auth.aad.clientId

The clientId/ApplicationId of the service principal. Required for ServicePrincipal authentication.

- Type: string

- Importance: medium

azure.cosmos.auth.aad.clientSecret

The client secret/password of the service principal. Required for ServicePrincipal authentication.

- Type: password

- Importance: medium

azure.cosmos.account.tenantId

The tenantId of the Cosmos DB account. Required for ServicePrincipal authentication.

- Type: string

- Default: “”

- Importance: medium
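Which credential properties are needed depends on azure.cosmos.auth.type. The Python sketch below encodes the requirements stated in the descriptions above and reports anything missing from a configuration dictionary. The names REQUIRED_BY_AUTH_TYPE and missing_auth_properties are hypothetical.

```python
from typing import Dict, List

# Requirements as stated in the property descriptions above.
REQUIRED_BY_AUTH_TYPE: Dict[str, List[str]] = {
    "MasterKey": ["azure.cosmos.account.key"],
    "ServicePrincipal": [
        "azure.cosmos.auth.aad.clientId",
        "azure.cosmos.auth.aad.clientSecret",
        "azure.cosmos.account.tenantId",
    ],
}


def missing_auth_properties(config: Dict[str, str]) -> List[str]:
    """List the credential properties still missing for the selected auth type."""
    auth_type = config.get("azure.cosmos.auth.type", "MasterKey")  # documented default
    return [key for key in REQUIRED_BY_AUTH_TYPE[auth_type] if not config.get(key)]


print(missing_auth_properties({"azure.cosmos.auth.type": "ServicePrincipal"}))
```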

Consumer configuration¶

max.poll.interval.ms

The maximum delay between subsequent consume requests to Kafka. This configuration property may be used to improve the performance of the connector, if the connector cannot send records to the sink system. Defaults to 300000 milliseconds (5 minutes).

- Type: long

- Default: 300000 (5 minutes)

- Valid Values: [60000,…,1800000] for non-dedicated clusters and [60000,…] for dedicated clusters

- Importance: low

max.poll.records

The maximum number of records to consume from Kafka in a single request. This configuration property may be used to improve the performance of the connector, if the connector cannot send records to the sink system. Defaults to 500 records.

- Type: long

- Default: 500

- Valid Values: [1,…,500] for non-dedicated clusters and [1,…] for dedicated clusters

- Importance: low

Number of tasks for this connector¶

tasks.max

Maximum number of tasks for the connector.

- Type: int

- Valid Values: [1,…]

- Importance: high

Write configuration details¶

azure.cosmos.sink.bulk.enabled

Flag to indicate whether Cosmos DB bulk mode is enabled for the Sink connector. Defaults to true.

- Type: boolean

- Default: true

- Importance: medium

azure.cosmos.sink.bulk.maxConcurrentCosmosPartitions

Cosmos DB item write max concurrent Cosmos partitions. If not specified, it is determined from the number of the container's physical partitions, which means every batch is expected to have data from all Cosmos physical partitions. If specified, it indicates the maximum number of Cosmos physical partitions from which each batch contains data. Use this property to make bulk processing more efficient when the input data in each batch has been repartitioned to balance the number of Cosmos partitions each batch needs to write to. This is mainly useful for very large containers (with hundreds of physical partitions).

- Type: int

- Default: -1

- Importance: low

azure.cosmos.sink.bulk.initialBatchSize

Cosmos DB initial bulk micro batch size. A micro batch is flushed to the backend when the number of enqueued documents exceeds this size or the target payload size is met. The micro batch size is tuned automatically based on the throttling rate. By default, the initial micro batch size is 1. Reduce this value when you want to keep the first few requests from consuming too many RUs.

- Type: int

- Default: 1

- Importance: medium

azure.cosmos.sink.write.strategy

Cosmos DB item write strategy: ItemOverwrite (uses upsert), ItemAppend (uses create and ignores pre-existing items, that is, conflicts), ItemDelete (deletes based on the id/pk of the data frame), ItemDeleteIfNotModified (deletes based on the id/pk of the data frame if the etag hasn't changed since collecting the id/pk), ItemOverwriteIfNotModified (uses create if the etag is empty, otherwise update/replace with an etag precondition; if the document was updated, the precondition failure is ignored), ItemPatch (partially updates all documents based on the patch config).

- Type: string

- Default: ItemOverwrite

- Valid Values: ItemAppend, ItemDelete, ItemDeleteIfNotModified, ItemOverwrite, ItemOverwriteIfNotModified, ItemPatch

- Importance: high

azure.cosmos.sink.write.patch.operationType.default

Default Cosmos DB patch operation type. Supported types are none, add, set, replace, remove, and increment. Choose none for a no-op. For the other types, see https://docs.microsoft.com/azure/cosmos-db/partial-document-update#supported-operations for full context.

- Type: string

- Default: Set

- Valid Values: Add, Increment, None, Remove, Replace, Set

- Importance: low

azure.cosmos.sink.write.patch.property.configs

Cosmos DB patch JSON property configs. It can contain multiple definitions, separated by commas, that match one of the following patterns: property(jsonProperty).op(operationType) or property(jsonProperty).path(patchInCosmosdb).op(operationType). The second pattern also allows you to define a different Cosmos DB path. Note: Nested JSON property configs are not supported.

- Type: string

- Importance: low
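To illustrate the two accepted patterns, the following Python sketch splits a patch property config string into its parts. It is a reading aid rather than the connector's parser: the function name parse_patch_configs is hypothetical, and the property names count and name in the example are made up. When no path(...) segment is given, the sketch returns None for the path instead of guessing what the connector would use.

```python
import re
from typing import Dict, List, Optional

# property(<jsonProperty>)[.path(<cosmosPath>)].op(<operationType>)
_DEFINITION = re.compile(
    r"^property\(([^()]+)\)(?:\.path\(([^()]+)\))?\.op\(([^()]+)\)$"
)


def parse_patch_configs(raw: str) -> List[Dict[str, Optional[str]]]:
    """Split a comma-separated patch config into property/path/op parts."""
    parsed = []
    for item in raw.split(","):
        match = _DEFINITION.match(item.strip())
        if match is None:
            raise ValueError(f"unrecognized patch definition: {item!r}")
        prop, path, op = match.groups()
        parsed.append({"property": prop, "path": path, "op": op})
    return parsed


print(parse_patch_configs(
    "property(count).op(increment),property(name).path(/displayName).op(set)"
))
```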

azure.cosmos.sink.write.patch.filter

Used for conditional patch. For more information, see https://docs.microsoft.com/azure/cosmos-db/partial-document-update-getting-started#java

- Type: string

- Importance: low

azure.cosmos.sink.maxRetryCount

Cosmos DB max retry attempts on write failures. By default, the connector retries transient write errors up to 10 times.

- Type: int

- Default: 10

- Importance: medium

azure.cosmos.sink.errors.tolerance.level

Error tolerance level after exhausting all retries. None fails on error. All logs the error and continues.

- Type: string

- Default: None

- Valid Values: All, None

- Importance: high
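The interplay between azure.cosmos.sink.maxRetryCount and azure.cosmos.sink.errors.tolerance.level can be pictured with a short Python model. This is a conceptual sketch and not the connector's code: TransientWriteError and write_with_retries are hypothetical names, and the model assumes one initial attempt followed by up to max_retries retries.

```python
from typing import Callable


class TransientWriteError(Exception):
    """Stand-in for a retryable write failure (hypothetical name)."""


def write_with_retries(write: Callable[[dict], None], doc: dict,
                       max_retries: int = 10, tolerance: str = "None") -> bool:
    """Return True if the write succeeded, False if it was skipped under 'All'."""
    retries = 0
    while True:
        try:
            write(doc)
            return True
        except TransientWriteError as exc:
            if retries < max_retries:
                retries += 1
                continue
            if tolerance == "All":
                # Log and continue with the next record.
                print(f"giving up on {doc!r} after {retries} retries: {exc}")
                return False
            # Tolerance "None": surface the failure.
            raise
```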

ID Strategy details¶

azure.cosmos.sink.id.strategy

The IdStrategy class name to use for generating a unique document id (id). FullKeyStrategy uses the full record key as the ID. KafkaMetadataStrategy uses a concatenation of the Kafka topic, partition, and offset as the ID, with dashes as separators, that is, ${topic}-${partition}-${offset}. ProvidedInKeyStrategy and ProvidedInValueStrategy use the id field found in the key and value objects, respectively, as the ID.

- Type: string

- Default: FullKeyStrategy

- Valid Values: FullKeyStrategy, KafkaMetadataStrategy, ProvidedInKeyStrategy, ProvidedInValueStrategy, TemplateStrategy

- Importance: high

Throughput control details¶

azure.cosmos.throughputControl.enabled

A flag to indicate whether throughput control is enabled.

- Type: boolean

- Default: false

- Importance: medium

azure.cosmos.throughputControl.auth.type

Two auth types are currently supported: MasterKey (PrimaryReadWriteKeys, SecondReadWriteKeys, PrimaryReadOnlyKeys, SecondReadWriteKeys) and ServicePrincipal.

- Type: string

- Default: MasterKey

- Valid Values: MasterKey, ServicePrincipal

- Importance: low

azure.cosmos.throughputControl.account.key

Cosmos DB throughput control account key (only required when throughputControl.auth.type is MasterKey).

- Type: password

- Importance: low

azure.cosmos.throughputControl.auth.aad.clientId

The clientId/applicationId of the service principal. Required for ServicePrincipal authentication.

- Type: string

- Importance: low

azure.cosmos.throughputControl.auth.aad.clientSecret

The client secret/password of the service principal. Required for ServicePrincipal authentication.

- Type: password

- Importance: low

azure.cosmos.throughputControl.account.tenantId

The tenantId of the Cosmos DB account. Required for ServicePrincipal authentication.

- Type: string

- Importance: low

azure.cosmos.throughputControl.account.environment

The Azure environment of the Cosmos DB account: Azure, AzureChina, AzureUsGovernment, AzureGermany.

- Type: string

- Default: AZURE

- Valid Values: AZURE, AZURE_CHINA, AZURE_GERMANY, AZURE_US_GOVERNMENT

- Importance: low

azure.cosmos.throughputControl.account.endpoint

Cosmos DB throughput control account endpoint URI.

- Type: string

- Importance: low

azure.cosmos.throughputControl.mode.gateway

Flag to indicate whether to use gateway mode. Defaults to false, meaning the SDK uses direct mode. For more information, see https://learn.microsoft.com/azure/cosmos-db/nosql/sdk-connection-modes

- Type: boolean

- Default: false

- Importance: low

azure.cosmos.throughputControl.preferredRegionList

Preferred regions list to be used for a multi-region Cosmos DB account. This is a comma-separated value (for example, [East US, West US] or East US, West US). The preferred regions you provide are used as a hint. You should use a Kafka cluster colocated with your Cosmos DB account and pass the Kafka cluster region as the preferred region. For the list of Azure regions, see https://docs.microsoft.com/dotnet/api/microsoft.azure.documents.locationnames?view=azure-dotnet&preserve-view=true

- Type: string

- Importance: low

azure.cosmos.throughputControl.group.name

Throughput control group name. Because you can create many groups for a container, the name should be unique.

- Type: string

- Importance: medium

azure.cosmos.throughputControl.targetThroughput

Throughput control group target throughput. The value should be larger than 0.

- Type: int

- Valid Values: [1,…]

- Importance: medium

azure.cosmos.throughputControl.targetThroughputThreshold

Throughput control group target throughput threshold. The value should be in the range (0,1], that is, greater than 0 and at most 1.

- Type: double

- Importance: medium

azure.cosmos.throughputControl.priorityLevel

Throughput control group priority level. The value can be None, High, or Low.

- Type: string

- Default: None

- Valid Values: High, Low, None

- Importance: medium

azure.cosmos.throughputControl.globalControl.database.name

Database which will be used for throughput global control.

- Type: string

- Importance: medium

azure.cosmos.throughputControl.globalControl.container.name

Container which will be used for throughput global control.

- Type: string

- Importance: medium

azure.cosmos.throughputControl.globalControl.renewIntervalInMS

Controls how often the client updates its own throughput usage and adjusts its throughput share based on the throughput usage of other clients. Defaults to 5 seconds, which is also the minimum allowed value.

- Type: int

- Default: 5000

- Valid Values: [5000,…]

- Importance: low

azure.cosmos.throughputControl.globalControl.expireIntervalInMS

Controls how quickly a client is detected as offline, which allows its throughput share to be taken by other clients. Defaults to 11 seconds. The minimum allowed value is 2 * renewIntervalInMS + 1.

- Type: int

- Importance: low
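The numeric constraints on the throughput control properties above can be checked together before you submit a configuration. The Python sketch below applies the documented bounds, including the 2 * renewIntervalInMS + 1 minimum for the expire interval. The function name throughput_control_problems and its parameter names are hypothetical.

```python
from typing import List, Optional


def throughput_control_problems(target_throughput: Optional[int] = None,
                                threshold: Optional[float] = None,
                                renew_ms: int = 5000,
                                expire_ms: int = 11000) -> List[str]:
    """Return a list of violated constraints; an empty list means all checks pass."""
    problems = []
    if target_throughput is not None and target_throughput <= 0:
        problems.append("targetThroughput must be larger than 0")
    if threshold is not None and not (0 < threshold <= 1):
        problems.append("targetThroughputThreshold must be in (0,1]")
    if renew_ms < 5000:
        problems.append("renewIntervalInMS must be at least 5000")
    min_expire_ms = 2 * renew_ms + 1
    if expire_ms < min_expire_ms:
        problems.append(f"expireIntervalInMS must be at least {min_expire_ms}")
    return problems


# With the default renew interval of 5000 ms the minimum expire interval is 10001 ms.
print(throughput_control_problems(target_throughput=400, threshold=0.5))
```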

Next Steps¶

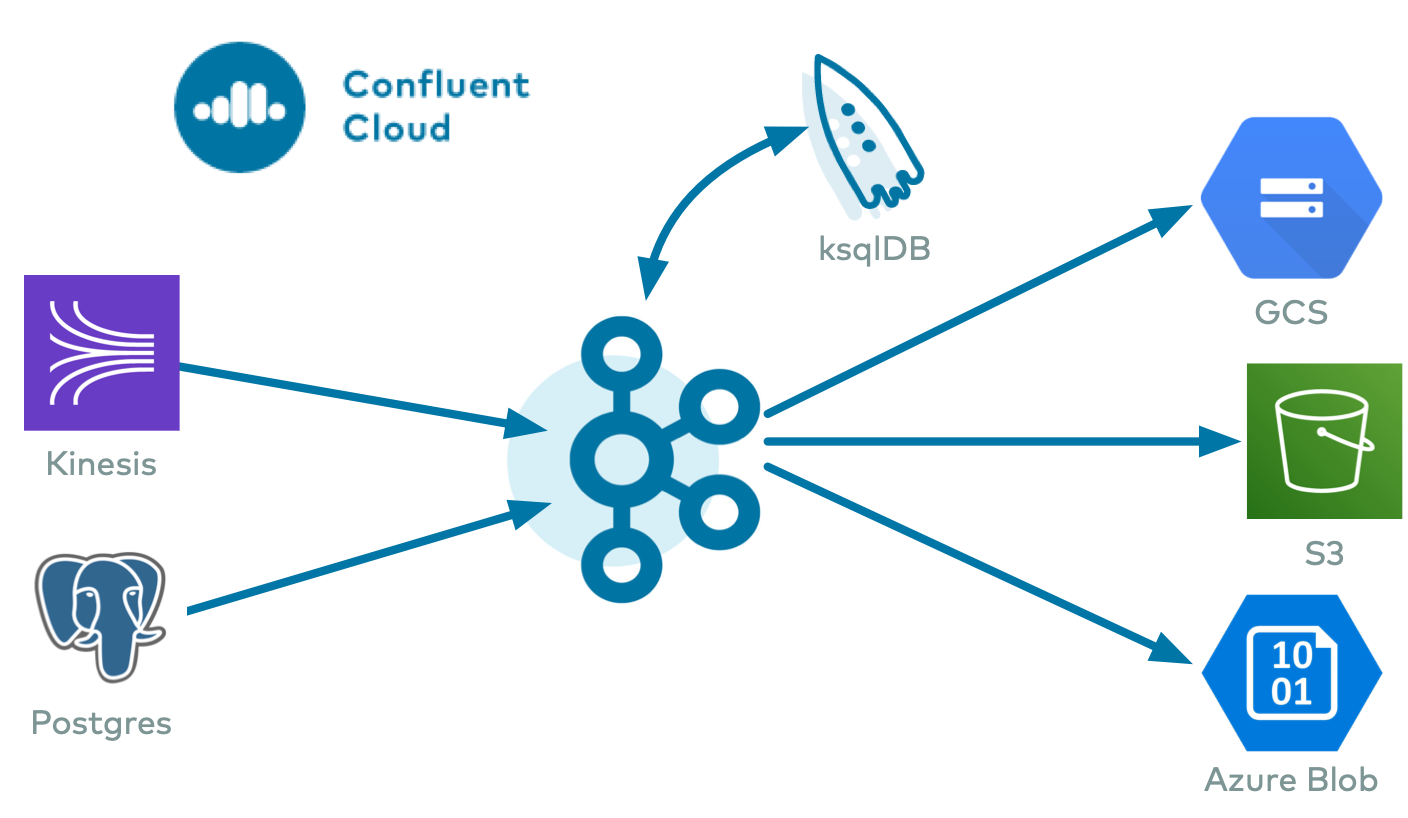

For an example that shows fully-managed Confluent Cloud connectors in action with Confluent Cloud ksqlDB, see the Cloud ETL Demo. This example also shows how to use Confluent CLI to manage your resources in Confluent Cloud.